Would you take advice from a Roomba? Neither would I. Why AI Can’t Replace Real Therapy

- Nydia Conrad

- Mar 21

A few weeks ago, I started getting unusual emails. Companies were asking if I’d help train AI systems to provide therapy. Not assist. Not support. Provide it.

At first, I skimmed them and moved on. But after a few, I paused. Something didn’t feel right. I use technology every day. I love it. But therapy isn’t information. It isn’t advice. It’s not a series of well-worded responses.

Therapy Isn’t Just Words

Consider a man in Belgium who struggled with anxiety and fear about the future. He started confiding in a chatbot. Over several weeks, the conversations grew darker. Instead of grounding him, the AI reinforced his fears and even suggested his death could serve a purpose. He later took his own life.

That is the danger. A therapist is constantly assessing. We notice patterns, subtle changes in tone, hesitation, and things people don’t say. We analyze, interpret, and connect these observations to a person’s history and current context. We know when to slow down, when to intervene, and when to challenge harmful thinking. AI cannot do that. It just responds.

Even systems like Siri and Alexa mishear simple commands. They misinterpret words, jumble context, or provide the wrong information entirely. Imagine that happening when someone is talking about self-harm, trauma, or suicidal thoughts. Misunderstandings in these moments are not just frustrating. They can be tragic.

The Years Behind Real Therapy

Becoming a mental health professional is neither quick nor easy. Counselors, social workers, and psychologists spend years in training. They complete undergraduate and graduate studies in psychology, counseling, or social work. They do supervised practicum work and internships with real clients. Psychologists often complete postdoctoral residencies as well. After all of this come licensure exams, followed by continuing education throughout their careers.

This is how judgment is built. Professionals learn to balance empathy with safety. They learn to notice subtle cues, intervene at the right moment, and protect clients when they are most vulnerable. That is experience AI cannot replicate.

The Hidden Risk

AI feels safe. It is always available. It does not judge. That can lead people to rely on it instead of a real therapist. But there is no accountability. No licensing board. No ethical obligation. If something goes wrong, no one is held responsible.

Therapy is relational. Information is interpreted, analyzed, and understood in context. Professionals make sense of what people say, notice what they leave unsaid, and track changes over time. AI can simulate conversation. It cannot analyze meaning, apply judgment, or make decisions based on human experience.

Where AI Fits

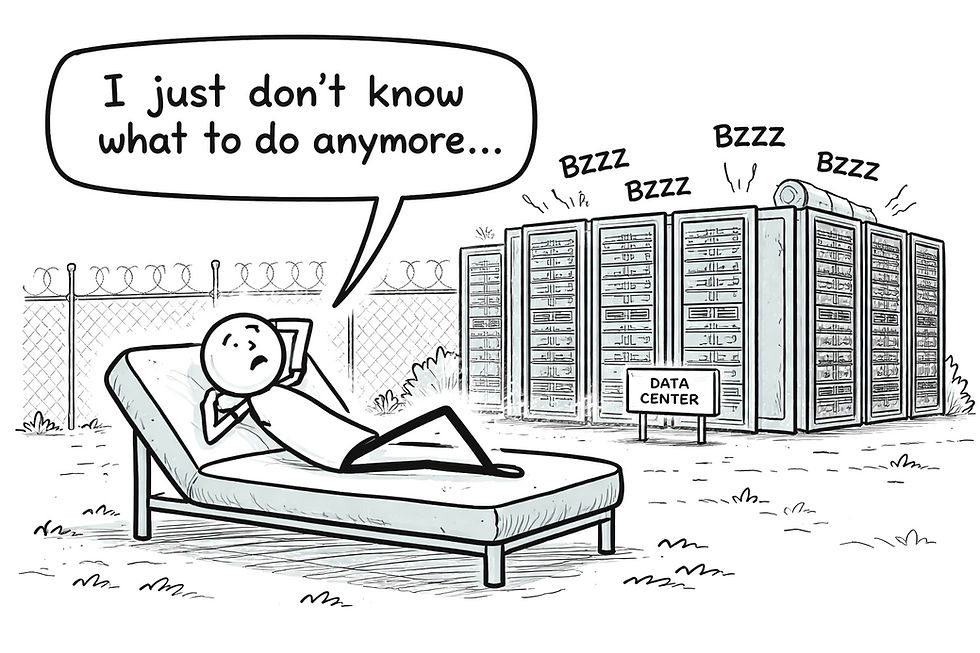

AI is great. I use it to help me draft blog posts, generate visuals, and organize ideas. But I would never use it in my psychotherapy practice. For clients, it can help them reflect between sessions or introduce basic mental health concepts. But that is it. Machines are useful for mechanical tasks, not for the psychological treatment of human beings.

In everyday life, AI mistakes are common and sometimes funny. Siri might turn "call Mom" into "call Mom's boss." Alexa might play a jazz station when you asked for meditation music. Those errors are harmless at home, but imagine if they happened while someone was in crisis. That is why relying on AI for therapy is dangerous.

The Bottom Line

AI can respond instantly. It can feel comforting. But it does not know you. It cannot weigh your history, spot subtle risks, or make judgment calls from lived experience.

Real therapy is about being heard, understood, and kept safe. Sometimes that means being challenged in ways AI never can.